Data Classification: Nearest Neighbour and Linear Classifiers

This post covers my key takeaways from Stanford's CS231n (2016) Lecture 2 by Andrej Karpathy. The lecture focuses on data classification strategies, specifically comparing the Nearest Neighbour approach to Linear Classification. Link: CS231n 2016 Lecture 2.

The Nearest Neighbour Approach

One of the earliest approaches to classification was the Nearest Neighbour algorithm. This method classifies an image by measuring its distance to all images in the training set and finding the closest match.

But how do we compute this distance metric? The simplest method is the L1 Distance (also known as Manhattan distance).

The L1 distance calculates the absolute difference between the individual pixel values of two images and sums them all up.

Implementation in Python

In Python, the nearest neighbour classifier essentially "memorises" the training data. For prediction, it loops over all the test images, calculates their L1 distances against the training data, finds the lowest distance index, and returns the predicted label.

# Source: https://cs231n.github.io/classification/

import numpy as np

class NearestNeighbor(object):

def __init__(self):

pass

def train(self, X, y):

""" X is N x D where each row is an example. Y is 1-dimension of size N """

# the nearest neighbor classifier simply remembers all the training data

self.Xtr = X

self.ytr = y

def predict(self, X):

""" X is N x D where each row is an example we wish to predict label for """

num_test = X.shape[0]

# lets make sure that the output type matches the input type

Ypred = np.zeros(num_test, dtype = self.ytr.dtype)

# loop over all test rows

for i in range(num_test):

# find the nearest training image to the i'th test image

# using the L1 distance (sum of absolute value differences)

distances = np.sum(np.abs(self.Xtr - X[i,:]), axis = 1)

min_index = np.argmin(distances) # get the index with smallest distance

Ypred[i] = self.ytr[min_index] # predict the label of the nearest example

return Ypred

While this works for smaller datasets, it becomes highly computationally expensive as the test size increases. Modern machine learning has shifted away from this paradigm; today, we prefer models that spend significant computing power upfront during the training phase so that the testing phase is highly efficient.

K-Nearest Neighbours (KNN)

Moving from a traditional Nearest Neighbour approach to a k-Nearest Neighbour (KNN) approach introduces a smoother decision boundary. Instead of just taking the single closest point, KNN looks at the k nearest points and performs a majority vote. For example, if a test point lands near one red point but four green points, KNN will classify it as green.

Although KNN is a great fundamental algorithm, it is generally ineffective for complex image classification tasks.

Linear Classification

To solve the shortcomings of KNN, we introduce Linear Classification, a parametric approach.

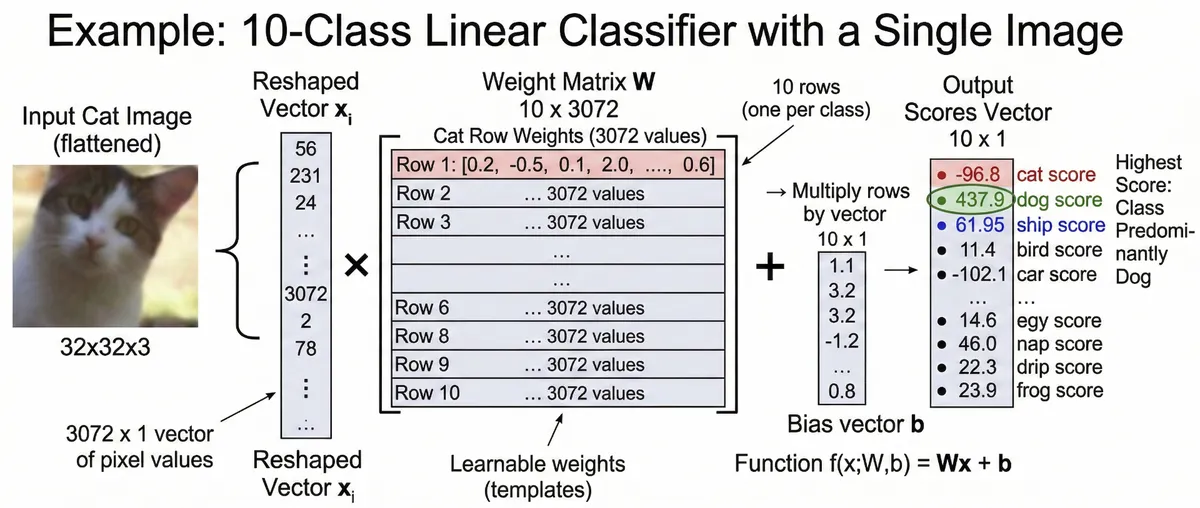

Here is how a linear classifier evaluates an image of a cat:

Flattening: The image's pixel values are extracted and flattened into a single column vector.

Weights: This vector is multiplied by a Weight Matrix (which contains the parameters the model has learned).

Bias: A bias vector is added to the result to provide a baseline offset for each class.

Output: The final result is a vector of scores for each category. The highest score determines the predicted class.

The Downsides of Linear Classifiers

A linear classifier essentially learns a single spatial "template" for each class based on colour values and basic shapes. Because it relies so heavily on global RGB colour distributions, misclassification can easily occur. For instance, if a picture of a car and a picture of a frog share a similar green colour palette, the classifier might get confused. Neural networks eventually solve this by learning hierarchical, complex features rather than just a single, flat colour template.